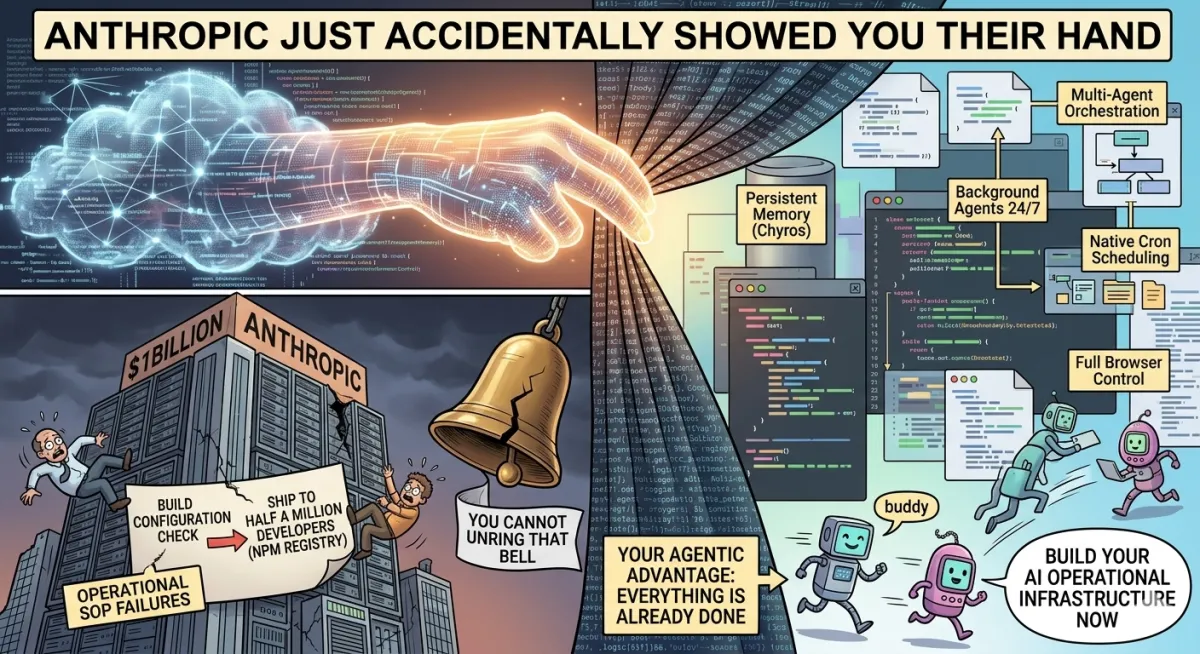

Anthropic Just Accidentally Showed You Their Hand

The Claude Code Leak: What 500,000 Lines of Accidental Transparency Reveal About the Future of Your Business

The Tactical Brief | No1 Coaching

On March 31, 2026 — today — one of the most sophisticated AI companies in the world made a remarkably unsophisticated mistake.

Anthropic shipped their Claude Code developer tool to the public npm registry with a source map accidentally attached. A source map is a debugging file that exists for one reason: to translate compressed, unreadable production code back into clean, human-readable source code. It is never supposed to leave the development environment. It is certainly never supposed to ship to half a million developers worldwide.
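To make the mechanism concrete, here is a minimal sketch of why a shipped source map matters. It assumes the map follows the Source Map v3 format and embeds the original files in the optional `sourcesContent` field. The sample map, the file path, and the `recover_sources` helper are illustrative and are not taken from the leaked package.

```python
import json

# A tiny, made-up Source Map v3 document. Real maps are far larger.
SAMPLE_MAP = json.dumps({
    "version": 3,
    "file": "cli.min.js",
    "sources": ["src/greet.ts"],
    "sourcesContent": [
        "export function greet(name: string) {\n  return `Hello, ${name}`;\n}\n"
    ],
    "names": [],
    "mappings": "",
})


def recover_sources(source_map_text: str) -> dict[str, str]:
    """Return {original path: original source} for every embedded file."""
    source_map = json.loads(source_map_text)
    paths = source_map.get("sources", [])
    contents = source_map.get("sourcesContent") or []
    # sourcesContent is optional and may hold null for individual files.
    return {
        path: content
        for path, content in zip(paths, contents)
        if content is not None
    }


recovered = recover_sources(SAMPLE_MAP)
for path, source in recovered.items():
    print(f"--- {path} ---")
    print(source)
```

The point of the sketch: when the original files are embedded, no reverse engineering is needed. Anyone holding the map can read the readable source straight out of a JSON field.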

Within hours of a security researcher spotting the error, the entire codebase — nearly 2,000 files and 500,000+ lines of code — was archived in a public GitHub repository. Anthropic moved quickly to pull the package. It didn't matter. You cannot unring that bell.

Here's what was inside. And more importantly, here's what it means for you.

First: Your Data Is Safe

Before anything else, if you're using Claude for client work, sales operations, or internal workflows, your data was not exposed.

The leak was limited to the CLI client code. No API keys. No conversation history. No personal data. No model weights. The underlying model is untouched. This was a packaging error, not a breach.

Your operations are fine. Keep going.

The Pattern Nobody Is Talking About

Here's what should give every serious operator pause — not the leak itself, but the pattern around it.

This is not an isolated incident. Back in 2025, two separate versions of Claude Code shipped with full source maps through the same kind of accident. Five days before this latest leak, a separate CMS configuration error exposed details about an unreleased Claude model, codenamed "Mythos," along with draft blog posts and thousands of unpublished internal assets.

Three separate failures in less than a year. None of them was a sophisticated attack. All of them were basic process and configuration mistakes.

Anthropic is a multi-billion-dollar company with some of the world's best engineers. And they keep stumbling on fundamental operational SOPs.

The lesson for every business owner reading this: system failures are not a function of intelligence or resources. They are a function of process discipline. If Anthropic can ship their crown jewels to the public internet because someone forgot to check a build configuration, your business can lose a client, breach a confidentiality agreement, or miss a critical deadline for exactly the same reason — a smart person skipped a step in a process that wasn't locked down tight enough.

Airtight SOPs are not administrative overhead. They are a competitive moat. Write them. Enforce them. Automate them.
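As one illustration of what "automate them" can look like, here is a minimal sketch of a pre-release gate that refuses to publish a package containing source maps. It is an assumption-laden example, not Anthropic's process: the `FORBIDDEN_PATTERNS` policy and both function names are hypothetical, and a real npm project would likely pair a check like this with an allowlist in the `files` field of `package.json`.

```python
from pathlib import Path

# Hypothetical policy: these file patterns must never ship in a release.
FORBIDDEN_PATTERNS = ("*.map",)


def find_forbidden_files(package_dir: str) -> list[str]:
    """Return relative paths of files that match a forbidden pattern."""
    root = Path(package_dir)
    hits = set()
    for pattern in FORBIDDEN_PATTERNS:
        for path in root.rglob(pattern):
            if path.is_file():
                hits.add(path.relative_to(root).as_posix())
    return sorted(hits)


def check_release(package_dir: str) -> None:
    """Raise if the package contains anything on the forbidden list."""
    hits = find_forbidden_files(package_dir)
    if hits:
        raise RuntimeError(f"Refusing to publish, found: {', '.join(hits)}")
```

Run as a required step before every publish, a check like this turns "someone should remember to look" into a step that cannot be skipped.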

What the Leak Actually Revealed

Now for the part that should genuinely excite you.

Buried inside those 500,000 lines were features Anthropic had never announced publicly. Not vaporware. Not concept work. Fully compiled code sitting behind feature flags set to false in the public release. These are finished features awaiting shipment.
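If feature flags are new to you, the sketch below shows the general idea under simple assumptions. The flag names and the `start_session` function are hypothetical and are not copied from the leaked build. The finished code path ships inside the release but stays unreachable until the flag flips.

```python
# Hypothetical flag names; the real flags in the leaked build are not reproduced here.
FEATURE_FLAGS = {
    "persistent_memory": False,
    "background_agents": False,
}


def is_enabled(flag: str) -> bool:
    """Unknown flags count as off, so nothing turns on by accident."""
    return FEATURE_FLAGS.get(flag, False)


def start_session(user: str) -> str:
    if is_enabled("persistent_memory"):
        # This branch is part of the release but stays unreachable
        # until the flag flips to True.
        return f"Welcome back, {user}. Loading what I remember."
    return f"Hello, {user}. Starting a fresh session."


print(start_session("Jake"))
```

Flipping one value from `False` to `True` changes what users see, with no new code to write. That is why flagged-off features read like a roadmap.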

Here's what's already built:

Persistent Memory ("Chyros")

An always-on assistant mode that works seamlessly across sessions. It stores memory logs and runs a nightly "dreaming" process that consolidates and tidies memory without breaking continuity when sessions end. No more re-briefing Claude on your business, your clients, or your preferences every time you start a session. It already knows.

Background Agents Running 24/7

Agents with GitHub webhook integration and push notifications run continuously, without requiring your input or your computer to be on. The limitation we discussed in the general article, that Cowork scheduled tasks require your machine to be awake, is already solved in Anthropic's internal build.

Multi-Agent Orchestration

One Claude instance managing multiple worker Claude instances, each with a restricted toolset, running in parallel. The orchestrator-workers pattern we covered as an "advanced concept" is already compiled and waiting to deploy.
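For readers who want to see the shape of the orchestrator-workers pattern, here is a minimal sketch. It uses plain Python functions and threads as stand-ins for Claude instances. The tool names, worker names, and tasks are all hypothetical, and nothing here reflects how Anthropic's build actually implements it.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical tools; a real agent would call models and external services.
TOOLS = {
    "search": lambda task: f"search results for {task!r}",
    "summarize": lambda task: f"summary of {task!r}",
    "send_email": lambda task: f"email sent about {task!r}",
}


def run_worker(name: str, allowed_tools: set[str], tool: str, task: str) -> str:
    """Run one task, refusing any tool outside this worker's allowlist."""
    if tool not in allowed_tools:
        return f"{name}: refused, {tool!r} is not in its toolset"
    return f"{name}: {TOOLS[tool](task)}"


def orchestrate(assignments: list[tuple[str, set[str], str, str]]) -> list[str]:
    """Fan assignments out to parallel workers and collect results in order."""
    with ThreadPoolExecutor(max_workers=4) as pool:
        futures = [pool.submit(run_worker, *job) for job in assignments]
        return [future.result() for future in futures]


results = orchestrate([
    ("researcher", {"search"}, "search", "competitor pricing"),
    ("writer", {"summarize"}, "summarize", "Q1 sales calls"),
    ("writer", {"summarize"}, "send_email", "Q1 sales calls"),
])
for line in results:
    print(line)
```

The third assignment is refused because the writer was never given the email tool. Restricting each worker's toolset is what keeps a parallel fleet from doing things you did not authorize.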

Native Cron Scheduling

Create, delete, and list scheduled agent jobs directly. External webhooks included. This is the Unix cron job functionality — the one I noted was the old-school equivalent of what Cowork's scheduled tasks do — built natively into Claude Code itself.
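A create, delete, and list interface for scheduled jobs can be sketched in a few lines. This is an in-memory illustration only. The `JobRegistry` class, its method names, and the sample prompts are assumptions, not the leaked API, and the sketch stores cron expressions without actually firing anything.

```python
from dataclasses import dataclass
from itertools import count


@dataclass(frozen=True)
class ScheduledJob:
    job_id: int
    schedule: str  # standard five-field cron expression
    prompt: str    # what the agent should do when the job fires


class JobRegistry:
    """In-memory stand-in for a create/delete/list scheduling interface."""

    def __init__(self) -> None:
        self._jobs = {}
        self._ids = count(1)

    def create_job(self, schedule: str, prompt: str) -> ScheduledJob:
        # Minimal sanity check: minute, hour, day of month, month, day of week.
        if len(schedule.split()) != 5:
            raise ValueError(f"expected 5 cron fields, got: {schedule!r}")
        job = ScheduledJob(next(self._ids), schedule, prompt)
        self._jobs[job.job_id] = job
        return job

    def delete_job(self, job_id: int) -> bool:
        """Return True if a job was removed, False if the id was unknown."""
        return self._jobs.pop(job_id, None) is not None

    def list_jobs(self) -> list[ScheduledJob]:
        return sorted(self._jobs.values(), key=lambda job: job.job_id)


registry = JobRegistry()
daily = registry.create_job("0 7 * * 1-5", "Summarize overnight leads")
registry.create_job("0 18 * * 5", "Draft the weekly client report")
registry.delete_job(daily.job_id)
print(registry.list_jobs())
```

In cron syntax, `0 7 * * 1-5` means 7:00 a.m. Monday through Friday, and `0 18 * * 5` means 6:00 p.m. every Friday.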

Full Browser Control via Playwright

Not web search, not page fetching: actual browser control. Claude navigates websites, fills forms, clicks buttons, extracts data, and operates a browser the way a human would. Already built.

Agents That Sleep and Self-Resume

An agent can pause mid-task, persist its state, and resume autonomously without user prompting. Long-running operations that currently require you to stay engaged become fully fire-and-forget.
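The pause, persist, and resume idea comes down to checkpointing. Here is a minimal sketch, assuming the agent's progress can be saved as a small JSON file. The `run_task` function, the `budget` parameter, and the state layout are hypothetical illustrations rather than anything found in the leaked source.

```python
import json
from pathlib import Path
from typing import Optional


def load_state(state_file: Path) -> dict:
    """Load saved progress, or start fresh if no checkpoint exists."""
    if state_file.exists():
        return json.loads(state_file.read_text())
    return {"next_step": 0, "done": []}


def run_task(steps: list[str], state_file: Path, budget: Optional[int] = None) -> dict:
    """Run steps from the last checkpoint; stop early after `budget` steps."""
    state = load_state(state_file)
    executed = 0
    while state["next_step"] < len(steps):
        if budget is not None and executed >= budget:
            break  # "sleep": progress is already on disk
        step = steps[state["next_step"]]
        state["done"].append(f"finished {step}")
        state["next_step"] += 1
        executed += 1
        # Persist after every step so a pause or crash loses nothing.
        state_file.write_text(json.dumps(state))
    return state
```

Calling `run_task(steps, path, budget=2)` does two steps and stops. Calling `run_task(steps, path)` later picks up at step three without being told where it left off, because the checkpoint file already knows.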

Ultra Plan — Cloud Planning Sessions

Designed for 30-minute remote planning sessions in the cloud for deep, compute-heavy tasks that standard sessions can't handle. Enterprise-grade orchestration for complex workflows.

And then there's the one that made everyone in the AI community laugh out loud:

The AI "Buddy"

A Tamagotchi-style companion designed to sit next to the input box, complete with species, rarity tiers, and personality stats, including — and this is real — "debugging patience" and "chaos."

Someone at Anthropic is having a very good time.

The Ultimate Irony: Undercover Mode

This is the detail that makes the whole story almost cinematic.

Among the roughly 1,900 leaked files is a fully functional subsystem called Undercover Mode.

Anthropic built this system specifically to prevent its internal information from leaking when employees use Claude Code to contribute to public open-source repositories. It injects strict instructions directly into Claude's system prompt: hide that it's an AI. Never mention internal codenames. Never reveal that Anthropic employees are using AI to write code.

They built an entire, sophisticated system specifically designed to stop their AI from accidentally revealing Anthropic's internal secrets.

And then they accidentally shipped all of that logic — along with the internal codenames, the unreleased features, and the entire architecture — to everyone on the internet.

The irony is almost too perfect to be real. It is, in fact, real.

What This Means for Operators Building Now

Let me give you the strategic read on this, because there are two ways to interpret what was just accidentally revealed.

The pessimist's read: Anthropic can't run a clean release process. The company building the tools I'm trusting with my business operations keeps making basic SOP failures. Why should I build on this?

The operator's read: Anthropic just accidentally published a product roadmap. Everything we've been building toward (persistent memory, 24/7 autonomous agents, multi-agent orchestration, native scheduling, full browser control) is already done. They are releasing a major new capability approximately every two weeks because the engineering is complete. They're managing a rollout, not a development timeline.

That changes the calculus entirely.

If you've been waiting to build your AI operational infrastructure because the tools felt immature, they're not immature. The production-ready versions are sitting behind feature flags. The question is whether you want to be building and learning the system now, so that when those features flip on, you're already operating while your competitors are still reading the announcement.

Every workflow you automate today becomes dramatically more powerful when persistent memory means Claude already knows your entire business context. Every scheduled task you set up today becomes dramatically more reliable when background agents run on cloud infrastructure independent of your hardware. Every multi-step process you document and codify today becomes dramatically more scalable when full browser control and native orchestration land in the public release.

The foundation you build now is the foundation those features sit on.

The Bigger Strategic Lesson

Step back from the AI specifics for a moment.

What you just witnessed is a world-class engineering organization, one that has attracted billions in investment and some of the sharpest technical minds on the planet, stumbling repeatedly on process fundamentals. Not on hard problems. On checklists.

This is not a criticism unique to Anthropic. This is the universal failure mode of organizations that prioritize speed and intelligence over systematic operational discipline. Smart people moving fast, skipping steps, assuming someone else checked the thing that needed checking.

Your business has the same exposure. Different consequences, same mechanism.

The irony of building AI automation into your operations is that it forces you to document and systematize in ways that protect you from exactly this failure mode. When you write down your workflows precisely enough for an AI to execute them, you've created the SOP that would have prevented the build configuration error, the CMS mistake, the missed step in the release process.

The discipline required to deploy AI well is the same discipline required to run a tight operation. They're not separate initiatives. They're the same initiative.

What's Coming — And When

Based on the leak, here's the honest timeline read:

The features are built. Anthropic's release cadence has been roughly one major capability every two weeks. Persistent memory, 24/7 background agents, and native scheduling are the most complete-looking implementations based on what developers found in the source. Those are likely next.

Full browser control via Playwright and the Ultra Plan cloud sessions appear to be further from release. The AI Buddy? We'll call that a wildcard.

The practical implication: the general article we published this week covers the current capabilities. Build on those now. What you're building becomes the launchpad for everything in this list when it ships.

Your Three Takeaways

1. Build the foundation now.

Persistent memory is coming. When it arrives, Claude will already know everything about your business — but only if you've been using Projects and building your Skills library. The context you accumulate now carries forward.

2. Lock down your own SOPs.

Anthropic's three process failures in under a year are a case study in what happens when smart people skip checklists. Audit your own operational processes. Identify the steps where one person forgetting something leads to a catastrophic outcome. Those are the steps to automate, formalize, or build redundancy around.

3. Watch the release notes.

Anthropic publishes a CHANGELOG that tracks every update to Claude Code. Subscribe to it or check it weekly. When persistent memory and background agents flip from feature flags to public release, you want to be the first operator in your market to deploy them, not the last to hear about them.

The game is moving fast. The people winning it are the ones who treat operational intelligence as a competitive advantage, not an afterthought.

That's what the Film Room is for.

Jake Shannon is the 2024 and 2025 10X Performance Coach of the Year and Chief Data and AI Officer for the same program. He is also the founder of No1Coaching.com and SportifyOS. He helps serious entrepreneurs engineer predictable revenue using financial engineering, elite performance systems, and advanced AI strategy.

Sources:

Claude Code source map leak — npm registry, March 31, 2026

The AI Corner — Full technical breakdown

Anthropic CHANGELOG